Can We Trust Artificial Intelligence? Exploring Privacy and Control in a Digital Age

This piece looks at a question that is becoming more important than ever: not how powerful AI is, but whether it can be trusted. As AI tools become part of how we work, write, and think, we are also sharing more of our data, often without fully realizing it. The article explores what is really happening behind the scenes when we use AI, and why privacy is not just a setting, but part of how these systems are built. It looks at the tradeoff between convenience and control, and how much information we give away every time we interact with these tools.

3/23/2026 · 2 min read

The growing integration of artificial intelligence (AI) into our daily lives raises critical questions about trust and privacy. While AI tools offer unprecedented convenience, they also demand a close examination of how our data is collected, managed, and protected. This article explores the complexity of trusting AI systems, focusing not just on their capabilities but, more importantly, on the implications of the data sharing inherent in their operation.

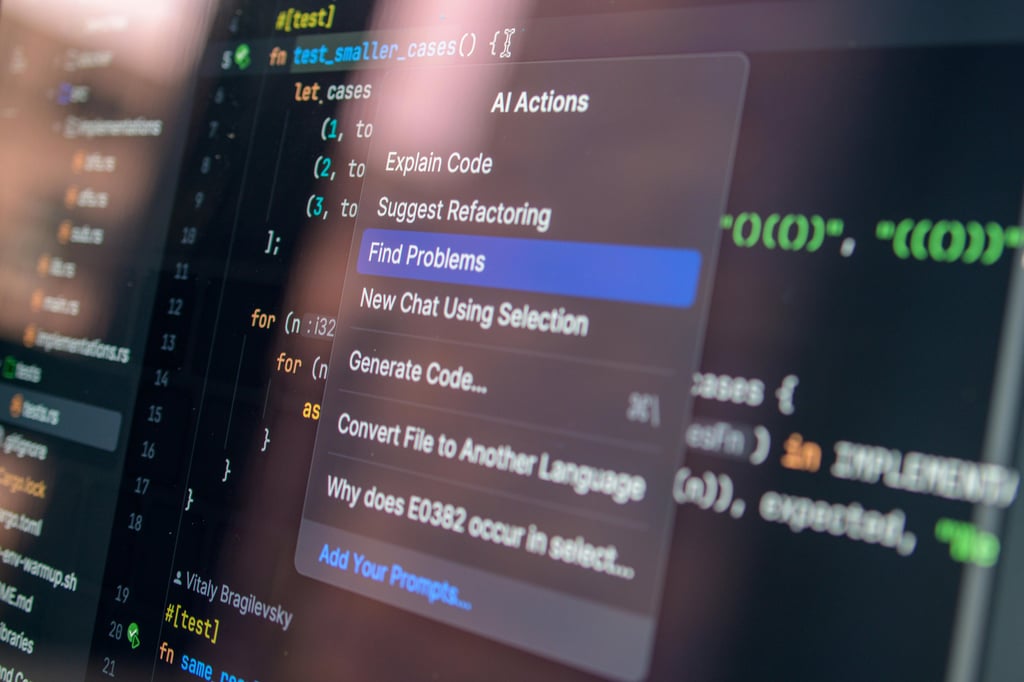

The Hidden Mechanisms of AI

Understanding AI requires a closer look at the mechanisms that enable these technologies to function. AI systems are trained on vast amounts of data, often sourced from user interactions. Consequently, when we use AI tools, we may be providing data that helps refine these systems. This enhances AI's performance, but it also raises concerns about how much personal information is being processed.

Privacy: A Fundamental Right or Just a Setting?

Privacy is often perceived as just a setting we can toggle on or off. In the context of AI, however, it is embedded in the architecture of the systems themselves. By design, many AI tools collect user data to improve their functionality. The tradeoff seems straightforward: we exchange personal information for enhanced convenience. Yet this prompts a deeper inquiry. How much control do we truly retain over our data? And are we fully aware of the compromises we make in our quest for ease? The balance between convenience and control remains a pressing issue as AI integrations become more commonplace.

The Dilemma of Data Sharing

Each interaction with AI tools involves a conscious or unconscious decision to share data. What matters is understanding the implications of that choice. As data breaches and cyber threats continue to rise, the stakes of our digital interactions are higher than ever. While AI offers clear benefits in productivity and creativity, it is worth asking whether that convenience comes at the cost of losing control over our personal information. What exactly are we trading for efficiency, and can we truly hold these systems accountable for how they handle our data?

If you want to better understand which tools protect your data more effectively, you might be surprised. I will not give everything away here, but I recommend exploring the insights shared by cybersecurity expert María Aperador, who breaks down which AI tools offer stronger data protection and why. Her analysis challenges common assumptions and highlights options that prioritize user privacy. ChatGPT, along with privacy-focused tools like DuckDuckGo, may not rank where you expect.

Conclusion

As AI continues to evolve, trust and privacy will become central to how we use these technologies. Access to information is no longer the problem; using it wisely is. Staying informed about how AI tools operate and how they use personal data is essential. As we navigate this increasingly complex digital landscape, recognizing the tradeoff between convenience and control will be key to shaping a future where AI can truly be trusted.

© 2025. All rights reserved.